Important Factors Learnt from Helen Keller That Today’s Natural Language Processing Lacks (4)

Before acquiring language skills, Helen would often lose her temper when things didn’t go her way. Whenever she was upset,...

AI that beats world champions at go and shogi has emerged thanks to deep learning.

But that AI can only win go and shogi matches.

That AI can do only one kind of job.

That AI is called Narrow AI.

What we need is AI that can do whatever we ask it to do.

That said, we don’t want to ask AI to do difficult jobs such as beating world champions at go or shogi.

What we want AI to do are the easy jobs that any human being can do.

For example, answering simple questions or keeping us company in conversation for a while.

AI that can do anything a human being can do is called AGI (artificial general intelligence).

AGI is the very technology that is needed now.

It’s said that the Singularity will occur when AGI is created.

A very high wall stands in the way of creating AGI, though.

The goal is AI that can do whatever we ask it to do, just as a human being does.

Why can’t we create that kind of simple AI?

When a person asks someone to do something, he or she uses language (natural language) to explain it.

The person who is asked understands the meaning of the language and does as explained.

In fact, AI can’t do this.

More precisely, AI doesn’t yet understand what “understanding the meaning of language” is.

To understand the meaning of language, ROBOmind-Project has developed a model of a mind that is the same as a human being’s.

ROBOmind-Project has developed a system that gives computers consciousness.

ROBOmind, Inc. is the one and only company that can realize “understanding the meaning of language”.

Mrs. Sullivan spelled the word “w-a-t-e-r” on Helen’s palm countless times while letting the water from the well touch her othe...

Helen Keller had been raised spoiled because she was blind, deaf and unable to speak. Mrs. Sullivan struggled to teach her words...

I first watched “The Miracle Worker”, the film about Helen Keller, on a movie screening day at my primary school. At that time, dubbing was yet...

Today’s post looks at what functions a brain needs in order to work, drawing on the book “An Anthropologist on Mars” written by Olive...

In the last post, we worked through a psychological problem called “the Wason four-card problem” in order to reveal differences be...

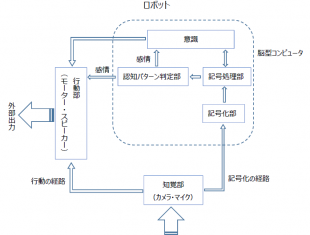

Today’s post considers the functions needed to build a human-like brain on a computer. I invited Mr. Yamada as ...

When I turned back from a zombie into a human at midnight in the residential area, I managed to get back to my house witho...

The next thing I knew, I was wandering around the city at night. I suppose I became a zombie temporarily from being bitten by a zombie,...

Kobe, the city I live in, has many old Western houses around the city. It is said that zombies of the olden days still reside in...
